I wrote this ansible role to set up a dovecot IMAP server. Once a year I move all mail from the previous year from various mailboxes to my dovecot server (using thunderbird).

I wrote my own ansible role to deploy/maintain a matrix server and a few goodies (element/synapse-admin). If you’re not using ansible you should still be able to understand the deployment logic by starting at tasks/main.yml and following includes/tasks from there.

libvirt/virt-manager is a nice VM management tool.

Their cheap 1-6€/month VPS offers are actually fine. Not much to say about it, it just works.

https://awesome-selfhosted.net/ is hosted on a Ionos VPS.

What’s your existing setup? For such a simple task, check if any of the tools you use currently can be adapted (simple text files on a web server? File sharing like Nextcloud and text files? Pastebin-like? Wiki? …). Otherwise a simple Shaarli instance could do the trick (just post “notes” aka. bookmarks without a URL). I use this theme to make it nicer. Or maybe a static site generator/blog.

Windows Servers

No

setup automatic responses to the alerts

It should be possible using the script to execute on alarm = /your/custom/remediation-script setting, see https://learn.netdata.cloud/docs/alerts-&-notifications/notifications/agent-dispatched-notifications/agent-notifications-reference. I have not experimented with this yet, but soon will (implementing a custom notification channel for specific alarms).

restarting a service if it isn’t answering requests

I’d rather find the root cause of the downtime/malfunction instead of blindly restarting the service, just my 2 cents.

I use netdata (the FOSS agent only, not the cloud offering) on all my servers (physical, VMs…) and stream all metrics to a parent netdata instance. It works extremely well for me.

Other solutions are too cumbersome and heavy on maintenance for me. You can query netdata from prometheus/grafana [1] if you really need custom dashboards.

I guess you wouldn’t be able to install it on the router/switch but there is an SNMP collector which should be able to query bandwidth info from the network appliances.

Netdata can also expose metrics to prometheus which you can then use in Grafana for more advanced/customizable dashboards https://learn.netdata.cloud/docs/exporting-metrics/prometheus
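For anyone who wants to peek at what Prometheus would scrape before wiring up Grafana, here is a minimal Python sketch. It assumes the agent listens on its default port 19999 and serves the Prometheus text format at /api/v1/allmetrics?format=prometheus; the host name used in the usage note is made up, and the parser only handles plain `series value [timestamp]` lines.

```python
from urllib.parse import urlencode, urlunsplit
from urllib.request import urlopen


def allmetrics_url(host: str, port: int = 19999) -> str:
    """Build the URL of the agent's Prometheus-format export endpoint."""
    query = urlencode({"format": "prometheus"})
    return urlunsplit(("http", f"{host}:{port}", "/api/v1/allmetrics", query, ""))


def parse_samples(text: str) -> dict[str, float]:
    """Parse Prometheus text exposition lines into {series: value}.

    Comment lines (# HELP / # TYPE) and blank lines are skipped, and an
    optional trailing timestamp is ignored.
    """
    samples: dict[str, float] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        if "}" in line:
            # Label values may contain spaces, so split after the closing brace.
            end = line.rindex("}") + 1
            series, rest = line[:end], line[end:].split()
        else:
            series, *rest = line.split()
        samples[series] = float(rest[0])
    return samples


def fetch_samples(host: str, port: int = 19999) -> dict[str, float]:
    """Download and parse the current metrics from one netdata agent."""
    with urlopen(allmetrics_url(host, port), timeout=10) as response:
        return parse_samples(response.read().decode("utf-8"))
```

Something like `fetch_samples("netdata-parent.example.org")` (hypothetical host) would then return every exported series as a dict, which is handy for checking metric names before building a dashboard.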


https://github.com/chriswayg/ansible-msmtp-mailer/issues/14 While msmtp has features to alter the envelope sender and recipient, it doesn’t alter the “To:” or “From:” message itself. When the Envelope doesn’t match these details, it can be considered spam

Oh I didn’t know that, good to know!

The proposed one-line wrapper looks like a nice solution

You can definitely replace senders with correct mail addresses for relaying through SMTP servers that expect them (this is what I do):

    # /etc/msmtprc
    account default
    ...
    host smtp.gmail.com
    auto_from on
    auth on
    user myaddress
    password hunter2
    # Replace local recipients with addresses in the aliases file
    aliases /etc/aliases

    # /etc/aliases
    mailer-daemon: postmaster
    postmaster: root
    nobody: root
    hostmaster: root
    usenet: root
    news: root
    webmaster: root
    www: root
    ftp: root
    abuse: root
    noc: root
    security: root
    root: default
    www-data: root
    default: myaddress@gmail.com

(the only thing I changed from the defaults in the aliases file is adding the last line)

This makes it so all/most system accounts likely to send mail are aliased to root, and root in turn is aliased to my email address (which is the one configured in host/user/password in msmtprc).

Edit: I think it’s actually the auto_from option which interests you. Check the msmtp manpage

If this is a “shared hosting” type of server (LAMP stack), you can usually run PHP applications (assuming they are pre-packaged and don’t need composer install or similar during the install process). Check https://awesome-selfhosted.net/platforms/php.html

I think Peertube would be overkill for a single channel, but it’s the closest to YouTube in terms of features (multiple formats/transcoding, comments, etc). Otherwise I would just rip the channel with yt-dlp and set up a “mirror” on something simple like a static site or blog. Find something that works, then automate (a simple shell script + cron job would do the trick).
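The "rip, then automate" part could look roughly like the sketch below, written in Python rather than shell so the command is easy to inspect. It assumes yt-dlp is installed and on the PATH; the channel URL, the destination directory and the file naming template are placeholders, not anything from an existing setup. The --download-archive file is what makes repeated runs cheap: videos already listed in it are skipped.

```python
import subprocess
from pathlib import Path


def build_command(channel_url: str, dest: Path) -> list[str]:
    """Return the yt-dlp invocation used to mirror one channel into dest."""
    return [
        "yt-dlp",
        # Remember downloaded video IDs so cron runs only fetch new uploads.
        "--download-archive", str(dest / "archive.txt"),
        # Store everything under the mirror directory.
        "--paths", str(dest),
        # Hypothetical naming scheme: date, title and video ID.
        "--output", "%(upload_date)s - %(title)s [%(id)s].%(ext)s",
        # Keep metadata and thumbnails so a static site can be generated later.
        "--write-info-json",
        "--write-thumbnail",
        channel_url,
    ]


def mirror(channel_url: str, dest: Path) -> None:
    """Download everything that is not yet in the archive file."""
    dest.mkdir(parents=True, exist_ok=True)
    subprocess.run(build_command(channel_url, dest), check=True)
```

Calling `mirror(...)` from a small script run daily by cron (or a systemd timer) keeps the mirror current; generating the static site or blog from the .info.json files is a separate step.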

On my desktop I do this with quodlibet alongside the KDE connect applet + KDE connect android app, which lets the phone control media players on the desktop. You probably don’t want to run a full desktop environment just for this, but it’s a good option if you already have a desktop PC with decent speakers.

Mentioning it just in case, because it works for me. If you’re looking for a purely headless server there are other good suggestions in this thread.

Syslog over TCP with TLS (don’t want those sweet packets containing sensitive data leaving your box unencrypted). Bonus points for mutual authentication between the server/clients (just got it working and it’s 👌 - my implementation here).

It solves the aggregation part but doesn’t solve the viewing/analysis part. I usually use lnav on simple setups (gotty as a poor man’s web interface for lnav when needed), and graylog on larger ones (definitely costly in terms of RAM and storage though)
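To illustrate what syslog over TLS with mutual authentication means on the wire, here is a small Python client sketch. It is not the linked implementation: it assumes a server that accepts RFC 5424 messages with the octet-counting framing of RFC 5425 on port 6514, and the file paths and host names passed in are up to you. Loading a client certificate is what gives the server something to authenticate.

```python
import socket
import ssl
from datetime import datetime, timezone


def format_message(hostname: str, app: str, text: str,
                   facility: int = 1, severity: int = 6) -> bytes:
    """Build an RFC 5424 message (defaults: facility user, severity info)."""
    pri = facility * 8 + severity
    timestamp = datetime.now(timezone.utc).isoformat()
    # <PRI>VERSION TIMESTAMP HOSTNAME APP-NAME PROCID MSGID STRUCTURED-DATA MSG
    return f"<{pri}>1 {timestamp} {hostname} {app} - - - {text}".encode("utf-8")


def frame(message: bytes) -> bytes:
    """Prefix the message with its length in bytes (octet counting)."""
    return str(len(message)).encode("ascii") + b" " + message


def send(server: str, message: bytes, ca_file: str, cert_file: str,
         key_file: str, port: int = 6514) -> None:
    """Send one message over TLS, presenting a client certificate."""
    # Verify the server against our own CA instead of the system store.
    context = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca_file)
    # The client certificate lets the server authenticate us in return.
    context.load_cert_chain(certfile=cert_file, keyfile=key_file)
    with socket.create_connection((server, port), timeout=10) as raw:
        with context.wrap_socket(raw, server_hostname=server) as tls:
            tls.sendall(frame(message))
```

In practice rsyslog or another daemon does this for you; the sketch is mostly useful for testing that the server rejects clients without a valid certificate.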

“buggy as fuck” because there’s a bug that makes it so you can’t easily run it if your locale is different than English?

It sends pretty bad signals when it causes a crash on the first lxd init (sure I could make the case that there are workarounds, switch locales, create the bridge, but it doesn’t help make it appear as a better solution than proxmox). Whatever you call it, it’s a bad looking bug, and the fact that it was not patched in debian stable or backports makes me think there might be further hacks needed down the road for other stupid bugs like this one, so for now, hard pass on the Debian package (might file a bug on the bts later).

About the link, Proxmox kernel is based on Ubuntu, not Debian…

Thanks for the link mate, Proxmox kernels are based on Ubuntu’s, which are in turn based on Debian’s, not arguing about that - but I was specifically referring to this comment

having to wait months for fixes already available upstream or so they would fix their own shit

any example/link to bug reports for such fixes not being applied to proxmox kernels? Asking so I can raise an orange flag before it gets adopted without due consideration.

DO NOT migrate / upgrade anything to the snap package

It was already in place when I came in (made me roll my eyes), and it’s a mess. As you said, there’s no proper upgrade path to anything else. So anyway…

you should migrate into LXD LTS from Debian 12 repositories

The LXD version in Debian 12 is buggy as fuck, this patch has not even been backported https://github.com/canonical/lxd/issues/11902 and 5.0.2-5 is still affected. It was a dealbreaker in my previous tests, and doesn’t inspire confidence in the bug testing and patching process on this particular package. On top of that, it will be hard to convince other guys that we should ditch Ubuntu and their shenanigans, and that we should migrate to good old Debian (especially if the lxd package is in such a state). Some parts of the job are cool, but I’m starting to see there’s strong resistance to change, so as I said, path of least resistance.

Do you have any links/info about the way in which Proxmox kernels/packages differ from Debian stable?

The migration is bound to happen in the next few months, and I can’t recommend moving to incus yet since it’s not in stable/LTS repositories for Debian/Ubuntu, and I really don’t want to encourage adding third-party repositories to the mix - they are already widespread in the setup I inherited (new gig), and part of the major clusterfuck that is upgrade management (or the lack thereof). I really want to standardize on official distro repositories. On the other hand the current LXD packages are provided by snap (…) so that would still be an improvement, I guess.

Management is already sold on the idea of Proxmox (not by me), so I think I’ll take the path of least resistance. I’ve had mostly good experiences with it in the past, even if I found their custom kernels a bit strange to start with… do you have any links/info about the way in which Proxmox kernels/packages differ from Debian stable? I’d still like to put in a word of caution about that.

/thread

This is my go-to setup.

I try to stick with libvirt/virsh when I don’t need any graphical interface (integrates beautifully with ansible [1]), or when I don’t need clustering/HA (libvirt does support “clustering” at least in some capability, you can live migrate VMs between hosts, manage remote hypervisors from virsh/virt-manager, etc). On development/lab desktops I bolt virt-manager on top so I have the exact same setup as my production setup, with a nice added GUI. I heard that cockpit could be used as a web interface but have never tried it.

Proxmox on more complex setups (I try to manage it using ansible/the API as much as possible, but the web UI is a nice touch for one-shot operations).

Re incus: I don’t know for sure yet. I have an old LXD setup at work that I’d like to migrate to something else, but I figured that since both libvirt and proxmox support management of LXC containers, I might as well consolidate and use one of these instead.

I see, agree with you that it should be supported by the terraform provider if it is at the VM .conf level… maybe a new attribute in https://registry.terraform.io/providers/Telmate/proxmox/latest/docs/resources/vm_qemu#smbios-block? I would start by requesting this feature in https://github.com/Telmate/terraform-provider-proxmox/issues, and maybe try to add it yourself? (scratch your own itch, fix it for everyone in the process). Good luck

I would have liked for this to be possible directly through Terraform

Is it this proxmox provider? It does allow specifying cloud-init settings: https://registry.terraform.io/providers/Telmate/proxmox/latest/docs/resources/cloud_init_disk. So you can use runcmd or similar to do whatever is needed inside the host to enable Intel SGX, during the terraform provisioning step.

AppArmour support for VMs, which is a secure enclave too (if I understand correctly).

Nope, AppArmor is a Mandatory Access Control (MAC) framework [1], similar to SELinux. It complements traditional Linux permissions (DAC, Discretionary Access Control). AppArmor is already enabled by default on Debian derivatives/Ubuntu.

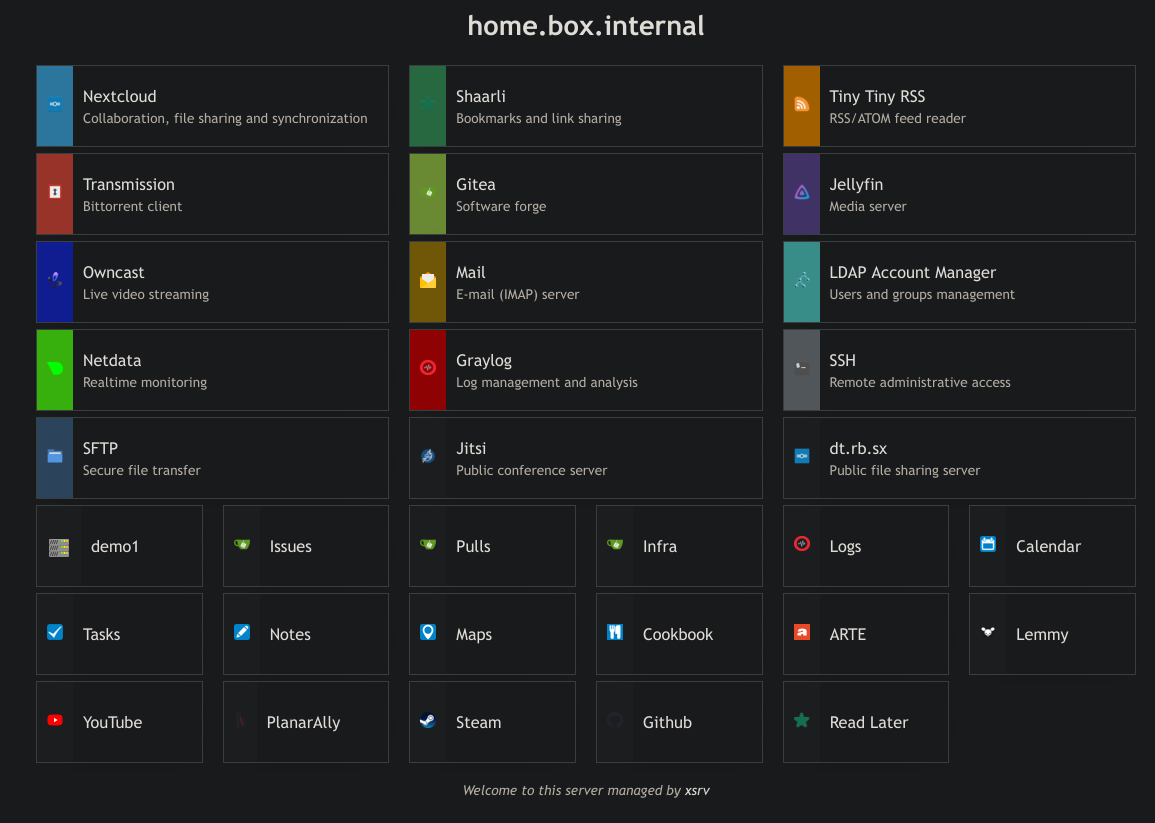

You can probably use it by templating out https://github.com/nodiscc/xsrv/blob/master/roles/homepage/templates/index.html.j2 manually or using jinja2. basically remove the {% ...%} markers and replace {{ ... }} blocks with your own text/links.

You will need a copy of the res directory alongside index.html (images, stylesheet).

You can duplicate the col-1-3 mobile-col-1-1 and col-1-6 mobile-col-1-2 divs as many times as you like and they will arrange themselves on the page, responsively.

But yeah this is actually made with ansible/integration with my roles in mind.
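If you go the manual route, the "remove the markers, replace the blocks" step can be approximated with a few regular expressions. This is only a rough sketch of hand-templating, not a jinja2 replacement: loops and conditionals are not evaluated, their bodies are simply kept once, and the variable name in the usage note is made up rather than taken from the actual template.

```python
import re

# {% ... %} statements (if/for/endif...), {# ... #} comments, {{ ... }} expressions
STATEMENT = re.compile(r"\{%.*?%\}", re.DOTALL)
COMMENT = re.compile(r"\{#.*?#\}", re.DOTALL)
EXPRESSION = re.compile(r"\{\{\s*(.*?)\s*\}\}", re.DOTALL)


def render_by_hand(template: str, values: dict[str, str]) -> str:
    """Strip jinja2 statements and fill in {{ ... }} blocks from a dict.

    Expressions without a matching key are left untouched so they are easy
    to spot and fix by hand afterwards.
    """
    text = COMMENT.sub("", template)
    text = STATEMENT.sub("", text)

    def substitute(match: re.Match) -> str:
        return values.get(match.group(1), match.group(0))

    return EXPRESSION.sub(substitute, text)
```

For example, `render_by_hand(open("index.html.j2").read(), {"some_title_variable": "My services"})` (hypothetical variable name) gives you an index.html to clean up by hand. If you have jinja2 installed anyway, rendering the template properly is the more robust option.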

So much server-side code :/ I wrote my own in pure HTML/CSS which gets rebuilt by ansible depending on services installed on the host. Basic YAML config for custom links/title/message.

Next “big” change would be a dark theme, but I get by with Dark Reader which I need for other sites anyway. I think it looks ok

7 daily backups, 4 weekly backups, 6 monthly backups (incremental, using rsnapshot). The latest weekly backup is also copied to an offline/offsite drive.

Netdata (agent only/not the cloud-based features), and a bunch of scanners running from cron/systemd timers, rsyslog for logs (and graylog for larger setups)

My base ansible role for monitoring.

Since your question is also related to securing your setup, inspect and harden the configuration of all running services and the OS itself. Here is my common ansible role for basic stuff. Find (preferably official) hardening guides for your distribution and implement hardening guidelines such as DISA STIG, CIS benchmarks, ANSSI guides, etc.

I’m curious why you’re not running your own CA since that seems to be a more seamless process than having to deal with ugly SSL errors for every website

It’s not, it’s another service to deploy, maintain, monitor, backup and troubleshoot. The ugly SSL warning only appears once, I check the certificate fingerprint and bypass the warning, from there it’s smooth sailing. The certificate is pinned, so if it ever changes I would get a new warning and would know something shady is going on.
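The "check the fingerprint once, then rely on the pin" routine can also be scripted for non-browser clients. Below is a minimal Python sketch using only the standard library; it assumes you recorded the SHA-256 fingerprint when you generated the certificate, and the host name in the usage note is a placeholder. Note that ssl.get_server_certificate does not validate the certificate, which is exactly what is wanted for a self-signed cert that is compared against a pin afterwards.

```python
import hashlib
import ssl


def fingerprint(pem_cert: str) -> str:
    """Return the SHA-256 fingerprint of a PEM certificate as AA:BB:CC..."""
    der = ssl.PEM_cert_to_DER_cert(pem_cert)
    digest = hashlib.sha256(der).hexdigest().upper()
    return ":".join(digest[i:i + 2] for i in range(0, len(digest), 2))


def matches_pin(host: str, pinned: str, port: int = 443) -> bool:
    """Fetch the certificate a server presents and compare it to the pin."""
    pem = ssl.get_server_certificate((host, port))
    return fingerprint(pem) == pinned.upper()
```

`matches_pin("service.internal.example.org", "AA:BB:...")` returning False would be the scripted equivalent of the browser showing a new warning: the certificate changed and something is worth investigating.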

every time you rotate the certificate.

I don’t really rotate these certs, they have a validity of several years.

I’m wondering about how different the process is between running an ACME server and another daemon/process like certbot to pull certificates from it, vs writing an ansible playbook/simple shell script to automate the rotation of server certificates.

- Generating self-signed certs is ~40 lines of clean ansible [1], 2 lines of apache config, and one click to get through the self-signed cert warning, once.

- Obtaining Let’s Encrypt certs is 2 lines of apache config with mod_md and the HTTP-01 challenge. But it requires a domain name in the public DNS, and port forwarding.

- Obtaining certs from a custom ACME CA is 3 lines of apache config (the extra line is to change the ACME endpoint) and a 100k LOC ACME server daemon running somewhere with its own bugs, documentation, deployment and upgrade management tooling, config quirks… and you still have to manage certs for this service. It may be worth it if you have a lot of clients who don’t want to see the self-signed cert warning and/or worry about their private keys being compromised and thus needing to rotate the certs frequently (you still need to protect the CA key…)

likely never going to purchase Apple products since I recognise how much they lock down their device

hear hear

there are not that many android devices in the US with custom ROM support. With that said, I do plan to root all of my Android devices when KernelSU mature

I bought a cheap refurbished Samsung, installed LineageOS on it (Europe, but I don’t see why it wouldn’t work in the US?), without root - I don’t really need root, it’s a security liability, and I think the last time I tried Magisk it didn’t work. The only downside is that I have to manually tap Update for F-Droid updates to run (fully unattended requires root).

I’m currently reading up on how to insert a root and client certificate into Android’s certificate store, but I think it’s definitely possible.

I did it on that LineageOS phone, using adb push, can’t remember how exactly (did it require root? I don’t know). It works but you get a permanent warning in your notifications telling you that The network might be monitored or something. But some apps would still ignore it.

I’m not using a private CA for my internal services, just plain self-signed certs. But if I had to, I would probably go as simple as possible at first: generate the CA cert using ansible, use ansible to automate signing of all my certs by the CA cert. The openssl_* modules make this easy enough. This is not very different from my current self-signed setup, the benefit is that I’d only have to trust a single CA certificate/bypass a single certificate warning, instead of getting a warning for every single certificate/domain.

If I wanted to rotate certificates frequently, I’d look into setting up an ACME server like [1], and point mod_md or certbot to it, instead of the default letsencrypt endpoint.

This still does not solve the problem of how to get your clients to trust your private CA. There are dozens of different mechanisms to get a certificate into the trust store. On Linux machines this is easy enough (add the CA cert to /usr/local/share/ca-certificates/*.crt, run update-ca-certificates), but other operating systems use different methods (ever tried adding a custom CA cert on Android? it’s painful. Do other OS even allow it?). Then some apps (Web browsers for example) use their own CA cert store, which is different from the OS… What about clients you don’t have admin access to? etc.
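The Linux case mentioned above (copy the CA cert, run update-ca-certificates) is simple enough to sketch in a few lines of Python. This assumes a Debian-style system, a PEM-encoded CA certificate, and root privileges; the source file name is whatever your CA produced. The rename matters because update-ca-certificates only picks up files ending in .crt.

```python
import shutil
import subprocess
from pathlib import Path

# Directory scanned by update-ca-certificates for locally added CAs.
TRUST_DIR = Path("/usr/local/share/ca-certificates")


def destination_for(ca_cert: Path, trust_dir: Path = TRUST_DIR) -> Path:
    """Return where the CA cert must be copied, forcing a .crt extension."""
    return trust_dir / (ca_cert.stem + ".crt")


def install_ca(ca_cert: Path, trust_dir: Path = TRUST_DIR) -> Path:
    """Copy a PEM CA certificate into the trust dir and rebuild the store."""
    target = destination_for(ca_cert, trust_dir)
    shutil.copyfile(ca_cert, target)
    # Regenerates /etc/ssl/certs; needs to run as root.
    subprocess.run(["update-ca-certificates"], check=True)
    return target
```

This only covers applications that use the system store; browsers and other apps with their own CA store still need separate handling, as noted above.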

So for simplicity’s sake, if I really wanted valid certs for my internal services, I’d use subdomains of an actual, purchased (more like renting…) domain name (e.g. service-name.internal.example.org), and get the certs from Let’s Encrypt (using DNS challenge, or HTTP challenge on a public-facing server and sync the certificates to the actual servers that need them). It’s not ideal, but still better than the certificate racket system we had before Let’s Encrypt.


https://goaccess.io/